I shipped Flutter apps with Cursor for over a year. Six months ago I switched my daily driver to Claude Code, and I'm not going back.

This is the article I wish I had read before making the switch — what changes, what breaks, and how to set up a real Flutter workflow around an agentic CLI instead of an IDE.

What "vibe coding" actually means

Vibe coding — coined by Andrej Karpathy in early 2025 — is a way of building software where you describe intent in plain language and let an AI agent translate it into code. You stop writing every line yourself and start reviewing, redirecting, and shipping. You're not the typist anymore. You're the co-pilot.

I won't redo the full definition here — I covered it in the Cursor + Flutter setup article. What matters for this piece is that the tool you vibe-code with matters as much as the model behind it. Cursor and Claude Code share the philosophy but diverge hard on execution.

Why I switched from Cursor to Claude Code

Cursor was the first AI agent I worked with, and for months it did the job. But the deeper I pushed into complex Flutter apps, the more one issue surfaced: Cursor doesn't own a model. It routes your prompts across whatever third-party models keep inference cheap — GPT, Claude, Gemini, sometimes others.

That's fine when you're scaffolding a screen or asking for a regex. It breaks down the moment context, multi-step refactors, or subtle code generation start to matter.

Each model has its quirks. One handles tool calls cleanly. Another writes better Dart but forgets your folder conventions halfway through a refactor. A third hallucinates Riverpod APIs from 2022. When the underlying model swaps between sessions, or even inside a single session, you can't build a stable mental model of what the agent will actually do.

Sticking to a single model changes everything. Your prompts compound: you learn how the model interprets edge cases, where it needs hand-holding, where you can trust it to run loose.

The power of reasoning models

This is where Claude Code pulled ahead for me — and honestly, I didn't expect it.

Reasoning models are LLMs trained to think before they answer. Instead of streaming the first plausible response, they run an internal chain of intermediate steps — exploring options, ruling out dead ends, validating their own logic — before producing the final output. You don't see the reasoning in the answer; you just notice the answer is more grounded, more correct, and less likely to gloss over the edge case that would have bitten you in production.

For Flutter devs, reasoning earns its keep in three places:

- Architectural decisions. "Should this be a Riverpod `Notifier` or a `StateNotifier`? What about the offline case?" A non-reasoning model picks the first plausible answer. A reasoning model walks through your existing patterns, weighs trade-offs against your `CLAUDE.md` rules, and proposes the option that actually fits the codebase.

- Multi-file refactors. Renaming a domain entity used across 14 files, three feature modules, and a generated `freezed` class: the reasoning model plans the change before touching code. Fewer surprises, fewer broken imports, fewer 30-minute rebuild loops.

- Debugging non-obvious failures. "Why does my golden test fail only on iOS in CI?" A reasoning model compares symptoms across layers (Flutter version, font fallbacks, golden file generation, timezone) instead of guessing one cause and committing to it.

Claude Code exposes this through extended thinking: you can dial the reasoning budget up when the task warrants it, and down when you just want a quick edit.

Setup: getting Claude Code running in a Flutter project

Claude Code is a CLI, not an IDE. Installation is a single command — see the official Claude Code install docs for the latest. Once installed, you just run claude from any directory and you have an agent in your terminal.

What that means in practice for a Flutter project:

    cd my_flutter_app
    claude

The mental shift: you stop thinking "how do I autocomplete this line?" and start thinking "what task do I want done?". You give Claude the goal, for example "add a paywall screen using our Riverpod paywall notifier and link it from onboarding step 4", and it edits multiple files, runs the tests, and reports back.

Or use the VS Code Claude extension

Honestly, the bare terminal isn't how I work day-to-day. Most of the time I run Claude Code through the VS Code extension.

I prefer this setup because I can keep editing and reviewing code manually while an agent works on a separate task in another panel.

CLAUDE.md: the heart of the workflow

If you take one thing away from this article, it's this: Claude Code is only as good as your CLAUDE.md file.

CLAUDE.md lives at the root of your project. Claude reads it at the start of every session and treats it as binding context — architecture rules, conventions, gotchas, the things you'd onboard a new developer with. Without it, Claude writes generic Flutter code. With it, Claude writes your Flutter code.

A good CLAUDE.md for a production Flutter app covers at least:

- Architecture: 3-layer pattern (data / domain / presentation), where each lives, what depends on what.

- State management: Riverpod-first, when to use `Notifier` vs `Provider`, naming conventions.

- Routing: GoRouter setup, redirect guards, transition patterns.

- Tests: golden tests, widget tests, where mocks live, what not to mock.

- Project gotchas: "Don't use `size-N` Tailwind classes" in our landing CLAUDE.md, or "Generated `freezed` files live in this folder, don't edit by hand" in a Flutter app. The non-obvious stuff that breaks builds.

- Commit conventions: what the agent should put in commit messages.

Pro tip: keep CLAUDE.md as small as possible. Every line in it gets injected into every request, and that adds up fast in tokens and cost. Instead of cramming everything into the root file, point to companion docs from inside it (docs/architecture.md, docs/testing.md, docs/conventions.md) with a one-line note on when each one applies, so Claude opens them only when the task calls for it. One caveat: the Claude Code memory docs describe `@path` imports (@docs/architecture.md) as expanded when the session starts, so that syntax organizes your rules but may not save tokens. A lean CLAUDE.md that points to deeper docs beats one that tries to carry everything itself.
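As a rough illustration of the lean layout described above, here is a minimal sketch of a root CLAUDE.md. The individual rules and the docs/ file names are assumptions made up for the example. They are not the contents of ApparenceKit or of any real project.

```markdown
# Project rules

## Architecture
- 3 layers per feature: data / domain / presentation.
- presentation depends on domain; domain never imports from presentation.

## State management
- Riverpod first. UI state lives in a Notifier, not in widgets.

## Tests
- Run `flutter test` before reporting a task as done.

## Gotchas
- Generated freezed files are never edited by hand.

## Deeper docs (read only when the task touches the topic)
- Architecture details: docs/architecture.md
- Testing and golden tests: docs/testing.md
- Naming and commit conventions: docs/conventions.md
```

The paths are written plainly here. Prefixing them with @ is the other option, and how each form gets loaded is worth checking against the Claude Code docs for your version.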

Writing a good CLAUDE.md from scratch takes time. That's why ApparenceKit ships pre-configured Claude rules — the architecture, conventions, and Flutter-specific gotchas are encoded for you, so Claude Code generates code that fits the 3-layer architecture from prompt one. You can extend them with your own project rules; you don't start from a blank file.

If you want to understand the underlying concept first, the AI rules tip breaks down why custom rules matter and how to structure them.

Workflows where Claude Code shines for Flutter

Claude is really good at writing Flutter code. I've seen noticeably better results with Flutter than with native code. This is most likely due to Flutter's "everything is a widget" mindset.

Six months in, here are the patterns I now reach for daily:

1. Adding a complete feature, not just a screen

I like to plan a feature before letting Claude or any other AI write code. It's important to check that the AI understands the feature and the app's architecture before it starts. If it doesn't respect my architecture, I either explain it or improve my rules.

💡 Keep improving your rules. It's important to think of your CLAUDE.md as a living document that evolves with your codebase. Whenever you find yourself correcting the agent on the same point, add it to the rules.

Claude plans the change (data layer repository, domain entity, presentation notifier and widget), creates the files, wires the route, and runs flutter test to confirm nothing broke. It does in ~5 minutes what used to be a half-day of plumbing.

2. Refactoring a feature module

"Ex: The subscription module is leaking presentation logic into domain. Refactor it to put pure business rules in domain and move the UI state into a Riverpod notifier."

This is where reasoning earns its keep. Claude reads the module, identifies the boundary violations, proposes a plan, and only edits once you confirm.

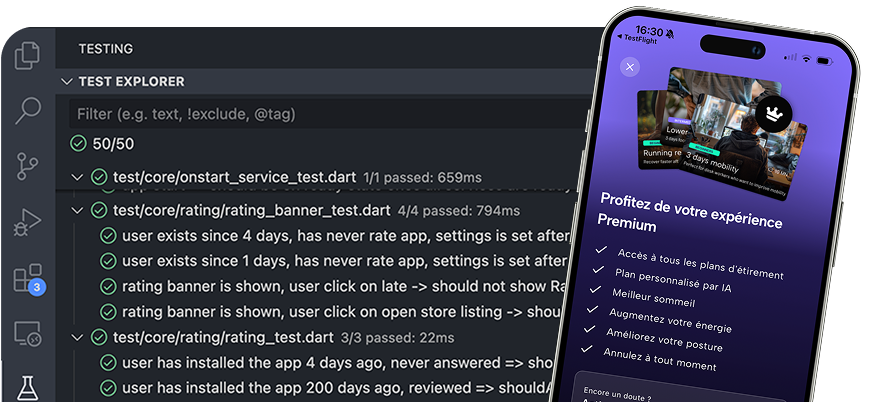

3. Writing golden tests at the end of a feature

I rarely write golden tests by hand anymore. "Generate golden tests for the new paywall screen across iPhone 15, Pixel 8, and a small device." Claude generates the tests, runs them with --update-goldens, and commits the baselines. See the unit test guide for the philosophy I want Claude to follow.

4. Debugging should-be-impossible failures

"flutter build ipa fails with framework 'Flutter' not found. The Xcode log is attached. Find the root cause, don't paper over it."

Shell access plus reasoning makes Claude actually useful at debugging — it runs commands, reads logs, checks Podfile.lock, looks at the output, and reports a real diagnosis instead of guessing.

MCP servers worth installing for Flutter dev

MCP (Model Context Protocol) servers extend Claude Code with live access to external systems. The ones I actually use on Flutter projects:

- Supabase MCP: query your dev database from Claude. "Show me the schema of the `users` table" or "List the last 10 rows in `feedbacks`" without leaving the terminal.

- Firebase MCP: same for Firestore / Firebase Auth. Read collection schemas, inspect security rules.

- Sentry MCP: pull recent crashes into context. "Why is this crash happening and where does it come from?" with the full stack trace already in the agent's context.

- GitHub MCP: inspect PRs, issues, recent commits. Useful when you want Claude to understand "what's been changed lately and why".

The pattern: MCP turns "agent that can read your filesystem" into "agent that can read your filesystem AND your live infrastructure". For a Flutter app where the bug is half client, half backend, that matters.
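For a team, one way to share these servers is a project-level `.mcp.json` at the repo root. The sketch below only shows the shape of that file. The server name `dev-database`, the package placeholder, and the environment variable name are all hypothetical, so take the real launch command from the documentation of the MCP server you install.

```json
{
  "mcpServers": {
    "dev-database": {
      "command": "npx",
      "args": ["-y", "<mcp-server-package>"],
      "env": { "ACCESS_TOKEN": "<dev-only-token>" }
    }
  }
}
```

Pointing the server at a dev database with a dev-only token, as in the Supabase example above, keeps the agent away from production data.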

Claude skills

Skills are a more recent addition to Claude Code, and they've changed how I think about repeatable workflows.

A skill is a folder of Markdown instructions, sometimes paired with scripts or templates, that Claude loads automatically when the conversation calls for it.

To trigger a skill manually, type / and you will see all available skills. Claude can also trigger skills contextually, based on the conversation.

Think of skills as specialized expert modes Claude can slip into. The description tells Claude when to use it: "Triggers on App Store screenshots, ASO copy, or store descriptions", or "Triggers when the user asks for a Flutter responsive layout".

The one I lean on the most is an ASO skill. App Store Optimization is its own discipline — keyword research, screenshot framing, app store description structure, A/B testing hooks. With the skill in place, the moment I ask "draft the App Store description for the paywall feature", Claude pulls in the playbook automatically: benefit-led first line, keywords without stuffing, 170-character preview limit.

I don't re-explain the rules every release.

Skills can live inside your project (.claude/skills/) so the whole team shares them, or globally for personal use.
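To make that concrete, here is a minimal sketch of what a project-level ASO skill could look like, saved as `.claude/skills/aso-copy/SKILL.md`. The skill name `aso-copy` and the wording of the steps are assumptions for illustration. Only the three rules themselves come from the playbook described above.

```markdown
---
name: aso-copy
description: Writes App Store listing copy. Use for App Store screenshots, ASO copy, or store descriptions.
---

# ASO copy

When drafting a store description:

1. Open with a benefit-led first line.
2. Work keywords in naturally, without stuffing.
3. Keep the preview within the 170-character limit.
```

The `description` field does the routing: it is what Claude matches against the conversation to decide whether to load the rest of the file.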

Claude commands

Commands are the explicit cousin of skills. Where a skill is loaded contextually, a command fires when you type its name: /release, /translate, /review. They live in .claude/commands/ as Markdown files — each file is a reusable prompt with optional arguments.

Example: /translate. My Flutter app uses slang for internationalization, with translation strings in JSON files per locale. The command finds new keys I added in the base locale, translates them into the other locales (with project-specific terminology rules), writes the results to the right files, and runs dart run slang to regenerate the type-safe accessors. One command, end-to-end.
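A command file for this could look roughly like the sketch below, saved as `.claude/commands/translate.md`. The step wording, the default locale, and the glossary path are hypothetical. `$ARGUMENTS` is the placeholder Claude Code fills with whatever you type after the command name.

```markdown
---
description: Translate new base-locale keys into every other locale
argument-hint: [base-locale]
---

Base locale: $ARGUMENTS (use `en` if empty).

1. Find keys present in the base locale JSON file but missing from the other locale files.
2. Translate them, following the terminology rules in docs/glossary.md.
3. Write each translation to the matching locale file.
4. Run `dart run slang` to regenerate the type-safe accessors.
5. Report the keys added per locale.
```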

A few others I keep around:

- /release: bumps the pubspec version, runs tests, builds the archive, drafts the changelog.

- /golden-update: regenerates golden files only for the modules I touched.

- /review: reviews the current diff against my rules and flags violations before I commit.

Each one is a few dozen lines of Markdown. Tiny investment, huge time saved per repeat.
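Commands can also lean on small scripts for the mechanical parts. As a sketch of the "only the modules I touched" step behind /golden-update, the helper below turns a list of changed paths into one `flutter test --update-goldens` command per feature module. It assumes a hypothetical layout where features live under `lib/features/<module>/` with tests under `test/features/<module>/`, so adjust the pattern to your own folders.

```shell
#!/bin/sh
# Print the unique feature modules touched by a list of changed paths.
# Reads one path per line on stdin.
# Assumption: features live under lib/features/<module>/ and
# their tests under test/features/<module>/ (hypothetical layout).
touched_modules() {
  sed -n -E 's#^(lib|test)/features/([^/]+)/.*#\2#p' | sort -u
}

# Turn each touched module into the golden-update command to run for it.
golden_update_commands() {
  touched_modules | while read -r module; do
    echo "flutter test --update-goldens test/features/$module"
  done
}

# Typical use inside a repo (assumes the base branch is called main):
#   git diff --name-only main...HEAD | golden_update_commands
```

The functions only print the commands, which leaves the decision to run them with you or with the agent following the command file.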

Commands can also be used by your agent. For example, say you asked for a new feature whose texts need translating at the end. Claude will simply call the /translate command once the feature is implemented, without you having to remember to do it.

Commands vs skills

| | Command | Skill |
|---|---|---|
| Triggered by | You typing /name | Claude inferring relevance |
| Best for | Repeatable, well-defined procedures | Specialized knowledge for a domain |
| Example | /release, /translate | ASO writing, performance audit |
| Token cost | Cheap (loaded on call only) | Slight overhead (description always considered) |

Commands automate, skills specialize. If the task is "do this exact procedure", write a command. If the task is "act like an expert in X", write a skill. They compose well — a command can invoke a skill mid-flow when it needs domain expertise.

Limits and gotchas

Claude Code isn't magic. Six things that bit me:

- Cost adds up. Agentic workflows burn tokens fast. A multi-file refactor that runs tests can cost real money. Keep an eye on usage, and use shorter prompts when the task is small.

- Long sessions drift. Beyond a few hours, the context fills up with old tool calls and stale state. Start a fresh session for each meaningful task instead of one mega-session per day.

- Don't let it loose without rules. A `CLAUDE.md`-less project will get generic code that doesn't match your patterns. Spend the time on the rules.

- Verify destructive actions. Claude can run shell commands. Always review `git reset`, `rm`, or anything that touches `pubspec.lock` before approving.

- Generated code still needs review. Reasoning helps, but it's not a substitute for reading the diff. Treat Claude like a fast, mostly-correct junior, not an oracle.

- The model updates. Anthropic ships new Claude versions regularly (Sonnet 4.5 → 4.6 → 4.7 over 2025–2026). Behavior changes between versions, usually for the better, but it's worth re-testing your prompts after major bumps.
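For the destructive-actions point, part of that review can be enforced in configuration instead of relying on attention. The fragment below is a sketch of a project `.claude/settings.json` that asks for confirmation before the commands named above. The rule strings follow the `Bash(prefix:*)` permission syntax as I understand it from the Claude Code settings docs, and the choice of `flutter pub upgrade` as the command that rewrites `pubspec.lock` is my assumption, so verify both against your version.

```json
{
  "permissions": {
    "ask": [
      "Bash(git reset:*)",
      "Bash(rm:*)",
      "Bash(flutter pub upgrade:*)"
    ]
  }
}
```

Moving a rule from `ask` to `deny` blocks the command outright, which may suit anything you never want an agent to run.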

Ship faster with pre-configured Claude rules

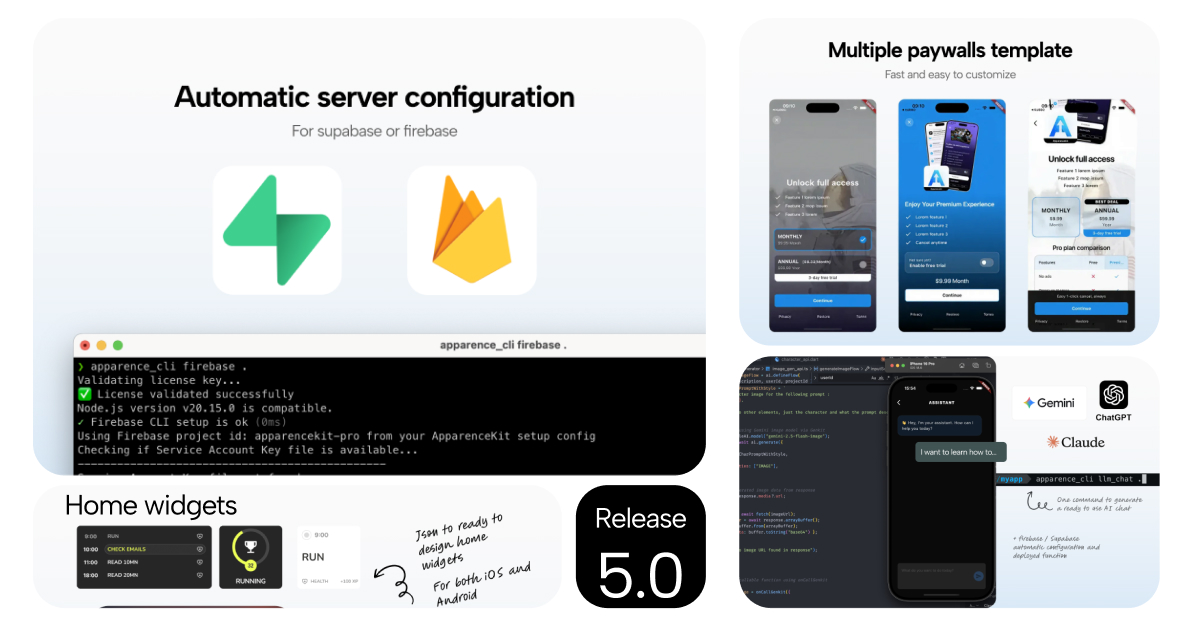

If you're starting a new Flutter app and you want Claude Code to be productive on day one, you have two options: write your own CLAUDE.md from scratch (a few weeks of iteration), or start from a Flutter starter kit with Claude rules already encoded.

ApparenceKit ships with:

- Pre-configured `CLAUDE.md` aligned with the 3-layer architecture, Riverpod state management, and Flutter conventions.

- Cursor rules for the same project, so your team can use either tool.

- AI Chat module if you're building an LLM feature into your app, not just using one to write it.

You own the rules — they're plain Markdown in your project, fully editable. The baseline saves you the weeks of prompt-engineering it takes to get an agent to actually fit your codebase.

TL;DR

- Cursor and Claude Code share the vibe coding philosophy but diverge on execution: IDE autocomplete vs CLI agentic workflows.

- Owning the model matters. Claude Code's consistency lets your `CLAUDE.md` rules and prompts compound across sessions.

- Reasoning models turn the agent from a fast typist into something that can actually plan a refactor or debug a CI failure.

- `CLAUDE.md` is the multiplier. A good rules file is the difference between generic Flutter code and code that fits your architecture.

- MCP servers extend the agent's context to your live infrastructure (database, Flutter...), making it useful for debugging and data-driven tasks.

- Skills and commands let you encode repeatable workflows and expert knowledge for even more automation.

- Keep your general rules lean and offload details to companion files to save tokens and cost.